The Fast Web

Over the past few months I’ve made a few changes to my blog, prompted by this comment on Lobste.rs. This part really got me going: “Definitely feels more like ‘underperforming CMS with database’ than static site.”

Since then, I have:

- Moved from DigitalOcean Kubernetes to Vercel

- Updated the theme to the latest release

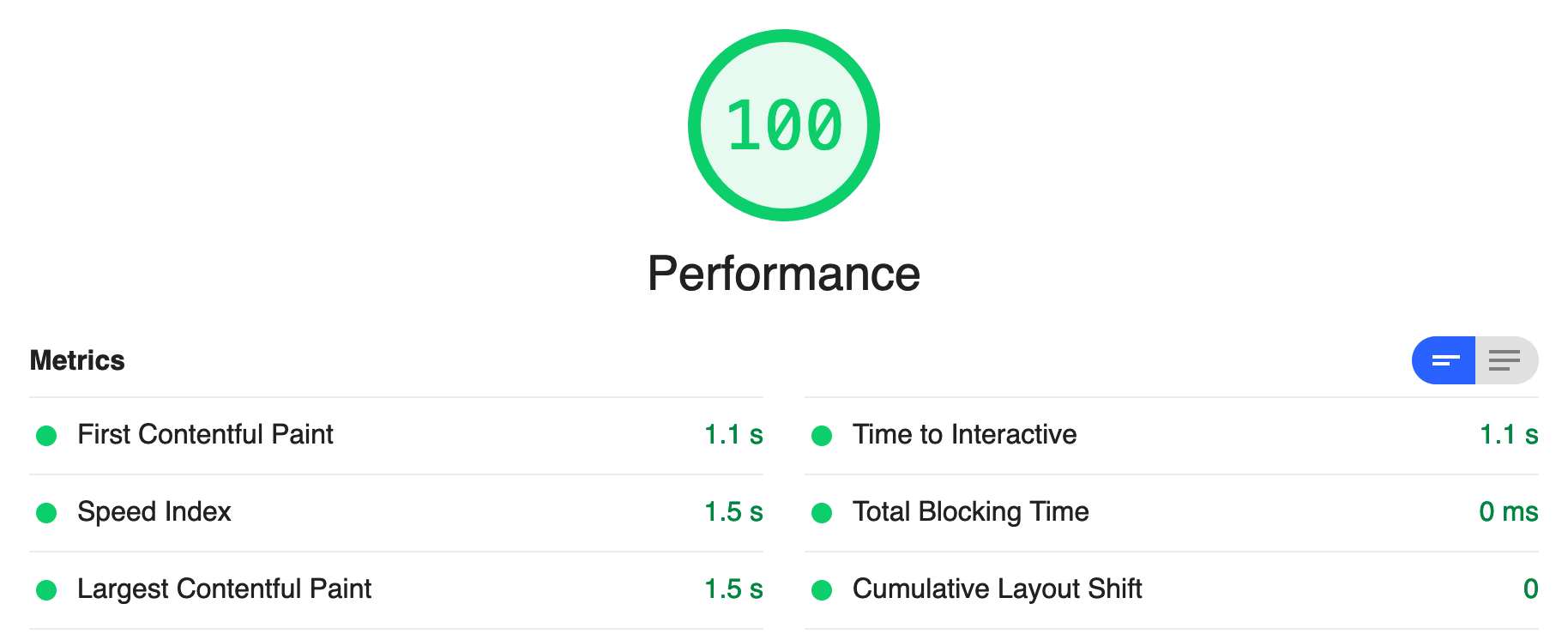

- Made some generic performance improvements, based on Lighthouse reports

Most of this work was aimed at reducing load time, so that the site feels like it loads instantly.

As much as I love messing around with Kubernetes and having a complicated pipeline to deploy changes to my blog, there are quite a few downsides:

- The complicated pipeline

- Unnecessarily large container images. Despite this being a static site, if I’m using Kubernetes, I still need to use a container to serve the files. I’m using nginx to do this, which also lets me do some fancy URL rerouting. Ultimately, this means requests are routed via a load balancer, to an nginx ingress deployment, and then to another nginx container that serves static files. That’s a lot of effort!

- A more complex process means I focus less on content. You’ll notice I only managed three posts last year.

- My Kubernetes nodes are hosted in a single data centre in Singapore, so content isn’t available at the edge. Some visitors will inevitably experience poor load times.

Vercel is quite the opposite by comparison:

- Simple pipeline. All I had to do was log into Vercel with GitHub and click a few buttons to set up a new deployment. Vercel worked out that I’m using Hugo on its own, and it needed zero configuration. I now get a free deployment for every commit, and even pull request previews.

- Build times are astonishingly fast. Where I used to have to wait up to 4 minutes for a build, I now wait roughly 30 seconds.

- Edge caching. Vercel deployments get pushed out across its edge network, so regardless of where a visitor is, this blog should load quickly.
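If you want a rough number rather than a feeling, here is a minimal sketch for timing page fetches from wherever you happen to be. It is not how I measured anything above: the function names `fetch` and `median_load_seconds` are made up for illustration, and it only times downloading the HTML with Python’s standard library, not rendering, images, or anything else Lighthouse looks at.

```python
import time
import urllib.request
from statistics import median
from typing import Callable


def fetch(url: str) -> bytes:
    """Download the response body for a URL (hypothetical helper)."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read()


def median_load_seconds(
    url: str,
    runs: int = 5,
    fetcher: Callable[[str], bytes] = fetch,
) -> float:
    """Fetch the URL `runs` times and return the median wall-clock time.

    The median is used so that one slow request (for example, a cold
    cache on the first hit) does not dominate the result.
    """
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        fetcher(url)
        timings.append(time.perf_counter() - start)
    return median(timings)
```

Calling `median_load_seconds("https://example.com/")` from machines in a few different regions would give a crude picture of whether visitors far from the origin see similar timings. The `fetcher` parameter exists only so the timing logic can be exercised without touching the network.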

So, what do you think of the load times? Is there anything else I should change? Let me know via Twitter, Mastodon, or email.
